Product Design

[CLOSET]

Role: UX · UI · Product · Research

Tools: Figma · Claude Code · Unity

Status: In Progress

Timeline: 3 months

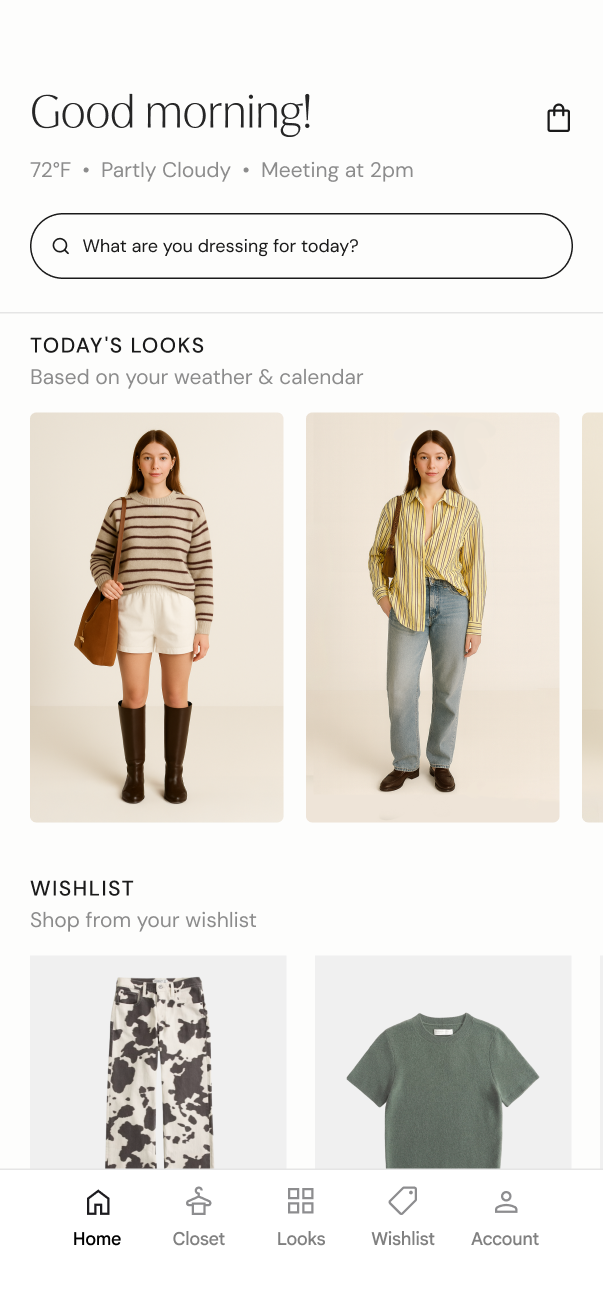

Overview

Rethinking the Morning Ritual

Getting dressed should be fun. Instead, it often becomes one of the most frustrating parts of the day. You stand in front of a full closet with nothing to wear.

The tools we have today weren't designed for this moment. The real moment of need is 7am, in your bedroom, in front of your own mirror, where getting dressed is still manual and driven by guesswork.

What if your mirror knew your wardrobe as well as Spotify knows your music?

Six friction points every morning

Decision Fatigue

Too many choices first thing in the morning with no system to reduce cognitive load.

Context Blindness

Existing tools don't know your weather, calendar, or what you wore yesterday.

The "Almost There" Problem

Users can sense an outfit is incomplete but can't identify what's missing.

Closet Blindness

A full wardrobe still feels like nothing to wear when you don't know how to style it.

Reactive Shopping

Users purchase impulsively rather than filling specific gaps in their wardrobe.

Personalization Gap

Most digital experiences are personalized, yet fashion decisions remain user-driven and manual.

Where the solution lives

Personal AI Stylist

AI that understands your weather, calendar, and taste, making recommendations that are actually relevant.

Built for the Moment

The bedroom mirror, not the fitting room or app, is where the real moment of stress happens.

Unlock What You Own

Most people wear a fraction of what they own. The opportunity is unlocking what's already there.

TrueStyle is your AI-powered mirror and style companion

TrueStyle exists for the moments when getting dressed becomes a battle. Standing in front of a full closet, certain you have nothing to wear. That deserves better than guesswork.

We're building the tool that should have always existed: a mirror that knows your wardrobe and style goals. Not just what you own, but how it works together and how to make it work harder.

Because the real failure isn't buying the wrong thing.

It's never figuring out how to wear it.

Market opportunity for personalized styling

The market is projected to grow by about 173% from 2024 to 2032.

The market for personalized styling is expanding fast, driven by AI, rising consumer expectations, and a growing gap between the clothes people own and the outfits they actually wear.

Source: Future Data Stats, Online Personal Styling Services Market Report

03 — Defining the Flow

Mapping a product with no playbook

This was a novel product. Existing smart mirrors from brands like Lululemon and Peloton weren't built for trying on clothing, so there was no direct precedent to benchmark against. I started from the user's goal instead and mapped the flow from there. That part was relatively straightforward.

The first challenge was figuring out how users would navigate without a touchscreen. I wanted it to feel intuitive and seamless, using only four gestures so users wouldn't be overwhelmed trying to memorize them.

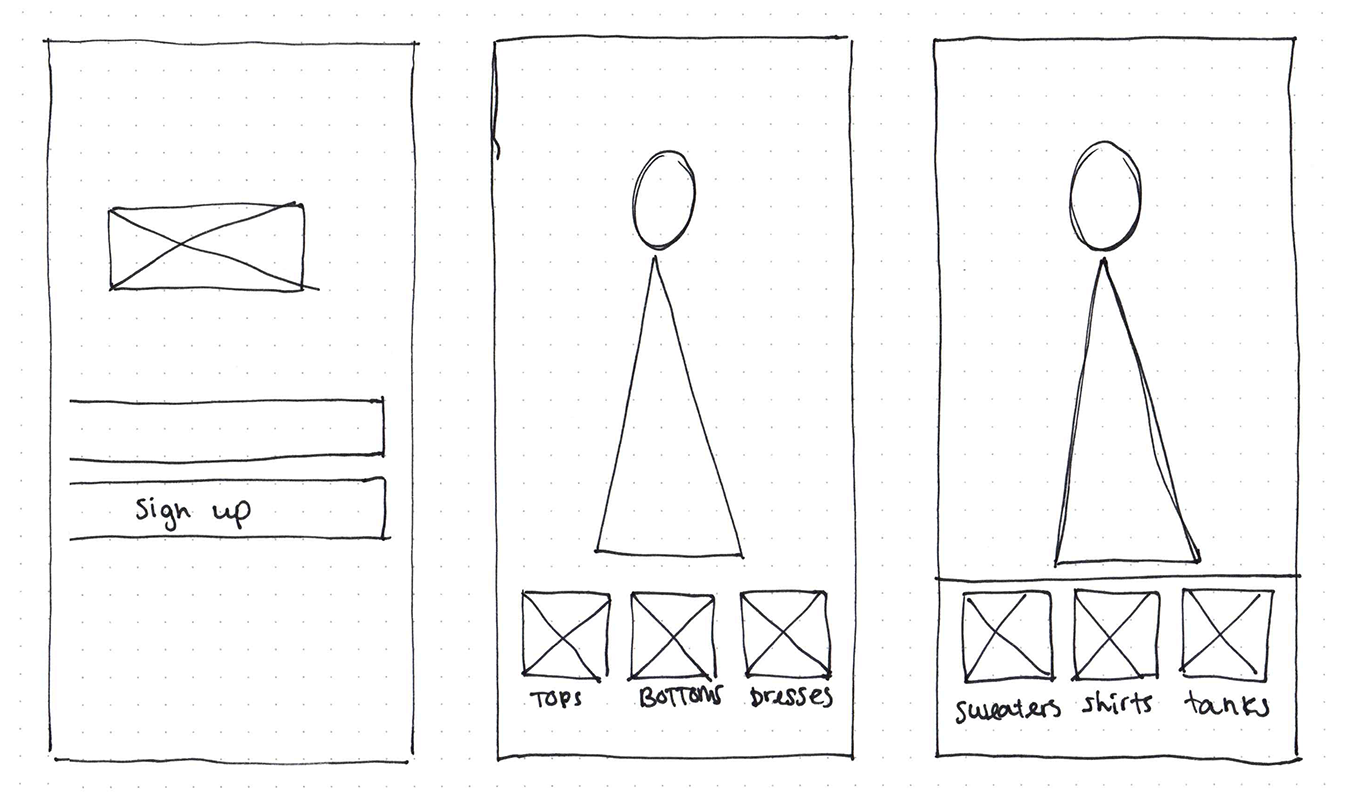

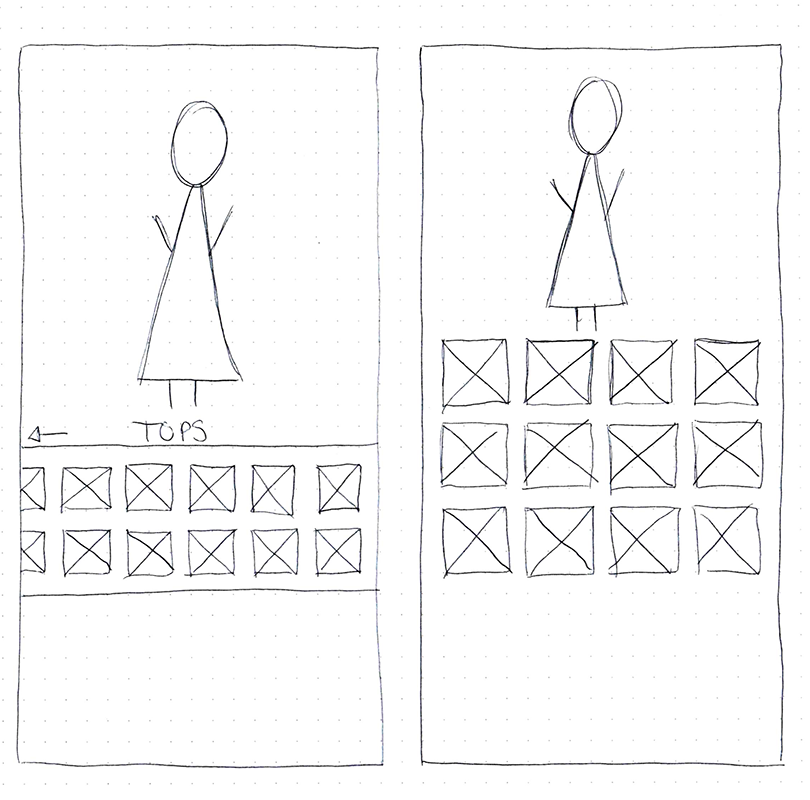

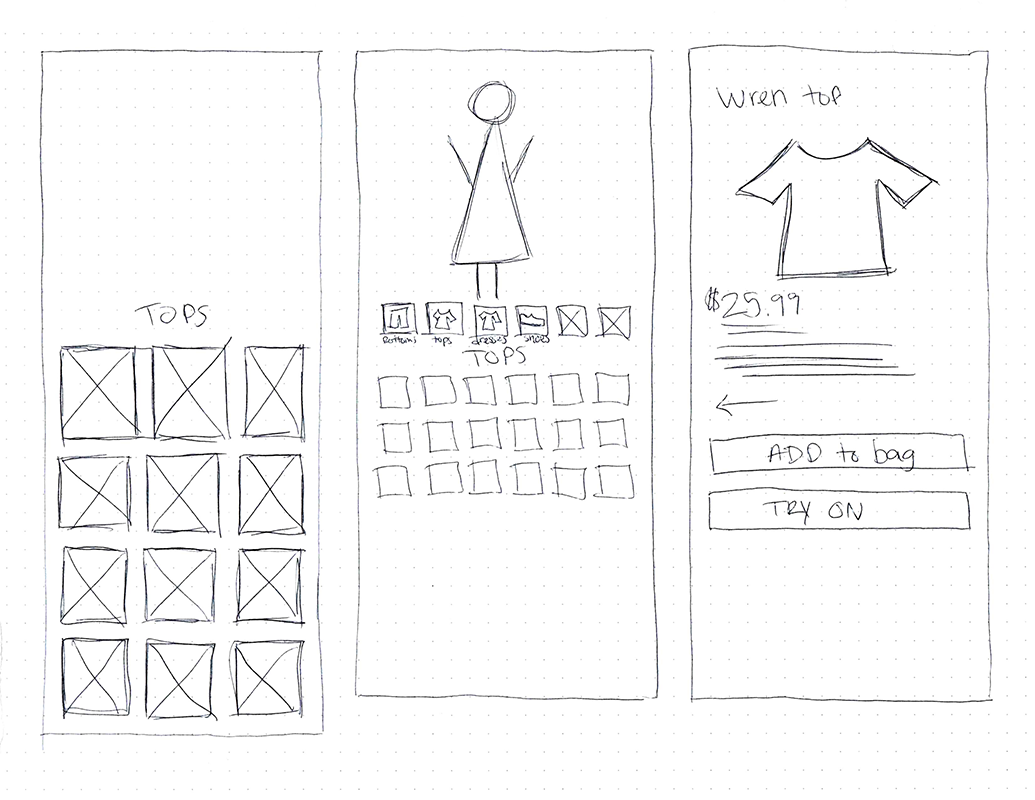

Low-fidelity sketches

AI-Generated Wireframes

I wanted to explore whether AI could accelerate the wireframing process or surface ideas I hadn't considered. I fed Figma Make my full concept alongside an initial screen and asked it to help generate UI for an in-home AR fashion mirror. The results missed the mark: the output looked like a standard desktop browser or dashboard, and nothing in it reflected the unique constraints of a mirror-based interface. My best explanation is that the model has few smart mirror or AR interface examples to draw from. This was genuinely new territory, and it confirmed that I was on my own.

Four gestures.

Everything else falls away.

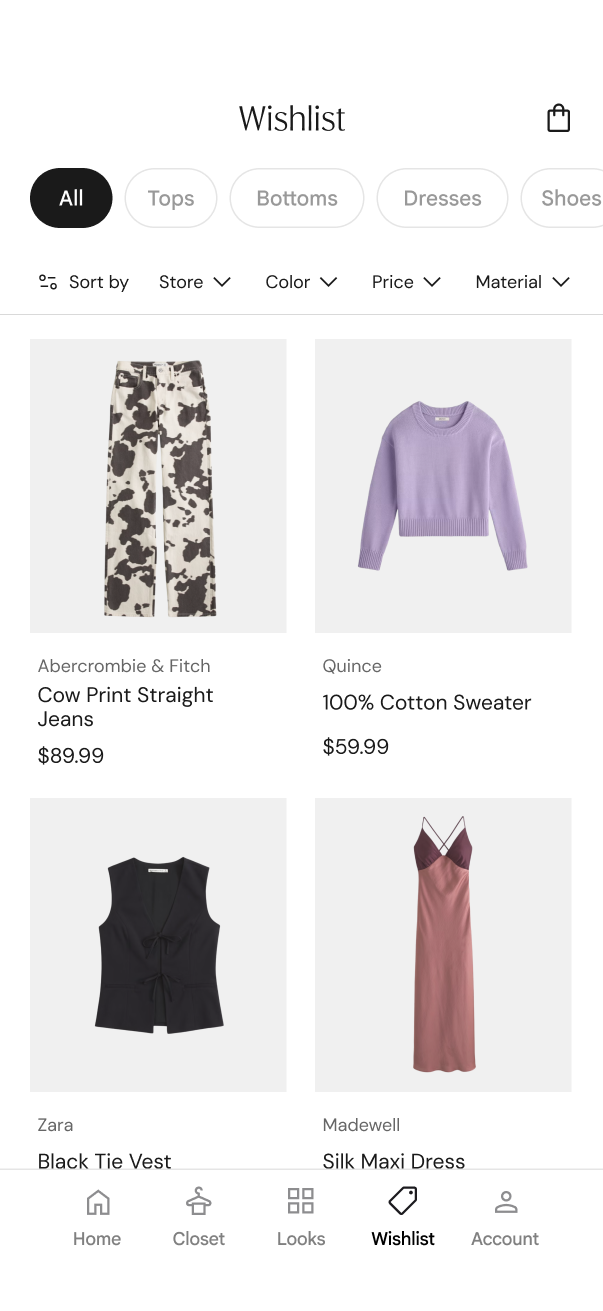

With gesture navigation in mind, I initially kept all interactions on the right side so users could scroll up and down or move back and forward through selections. Splitting controls across both sides would break that entirely. Keeping everything on one side is what makes the four-gesture system possible. When users find something they want, a swipe right saves it to their wish list on the mobile app.

Swipe Up

Scroll up through outfit options

Swipe Down

Scroll down through outfit options

Swipe Left

Go back

Swipe Right

Make a selection / Save to wish list on mobile app

05 — The Pivot

Gestures taught me the principles.

Voice delivered them.

The four-gesture system worked logically, but logic isn't everything. To get to a specific outfit category, users had to swipe through multiple layers of navigation. Voice lets someone say exactly what they want and skip the extra steps entirely. The interaction model stayed simple; the path to the result got dramatically shorter.

Gesture-first navigation

With no direct precedent for a styling mirror, I designed a four-gesture system: swipe up/down to browse, left to go back, right to save.

Four steps to get where you're going

Scenario walkthroughs revealed the core tension: gesture navigation forced users through layered menus to reach what they wanted. Someone who already knows they want a casual Friday look shouldn't have to swipe through four screens to get there.

Same principles, different modality

The gesture system's core strengths (minimal commands, low cognitive load, fast navigation) translated directly to voice. The principles didn't change; the input channel did.

Reduced Cognitive Load

Voice removes the decision of which gesture to use. Users speak their intent directly, with no mapping between action and meaning.

Faster Idea → Outfit

Saying "show me something casual" is faster than swiping through categories. Voice collapses multiple navigation steps into a single request.

No System to Learn

Gestures require learning a structure: which direction does what. Voice commands are self-documenting. Users already know the language.

Direct Access

Instead of navigating four layers deep to reach a category, users just ask. "Show me work outfits" bypasses the entire menu structure in a single statement.

The best interaction model is one you don't notice. Voice lets the mirror get out of the way so the user can focus on what matters: how they look and feel.

06 — Accessibility

Voice-First, Not Voice-Only

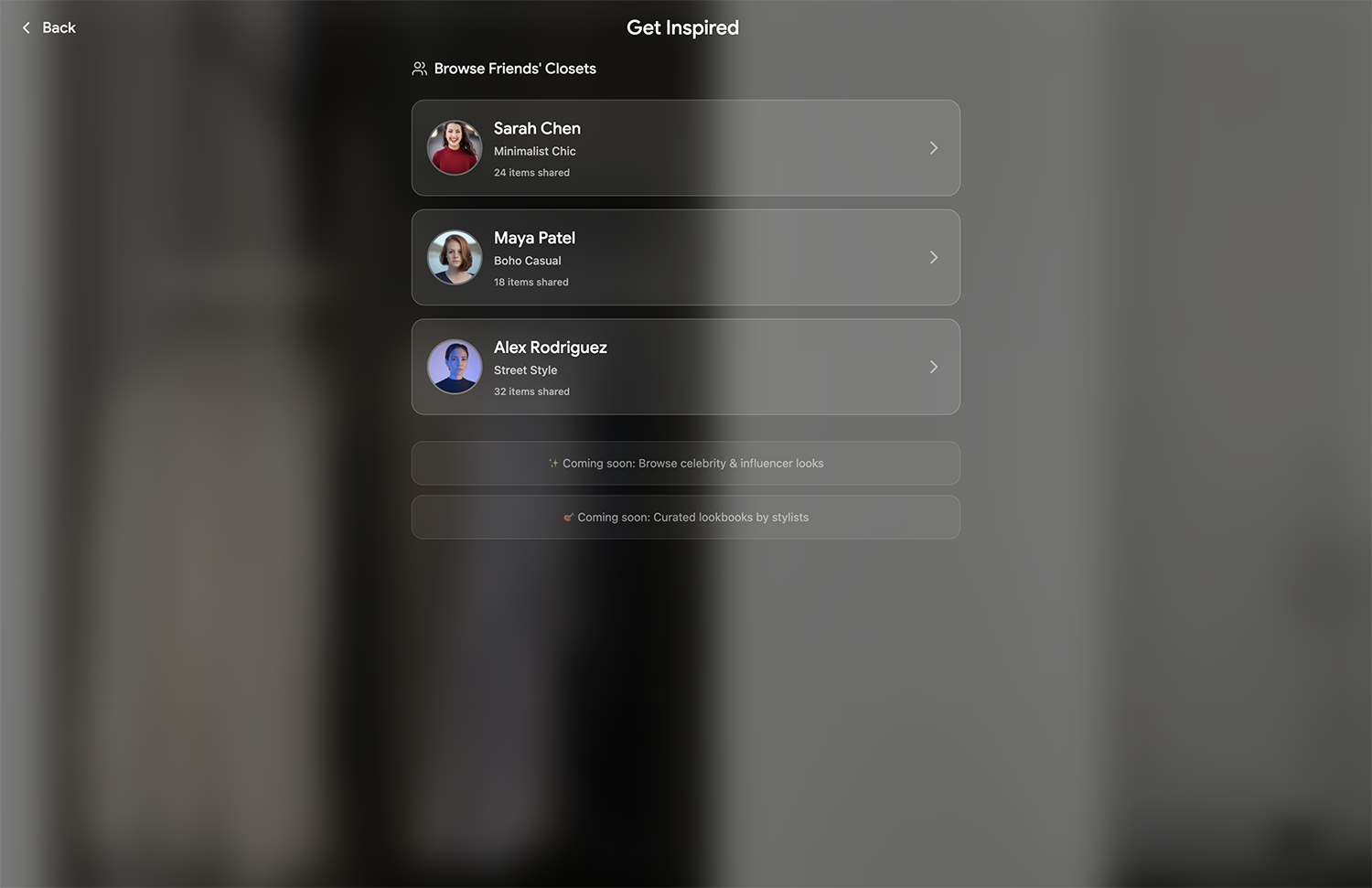

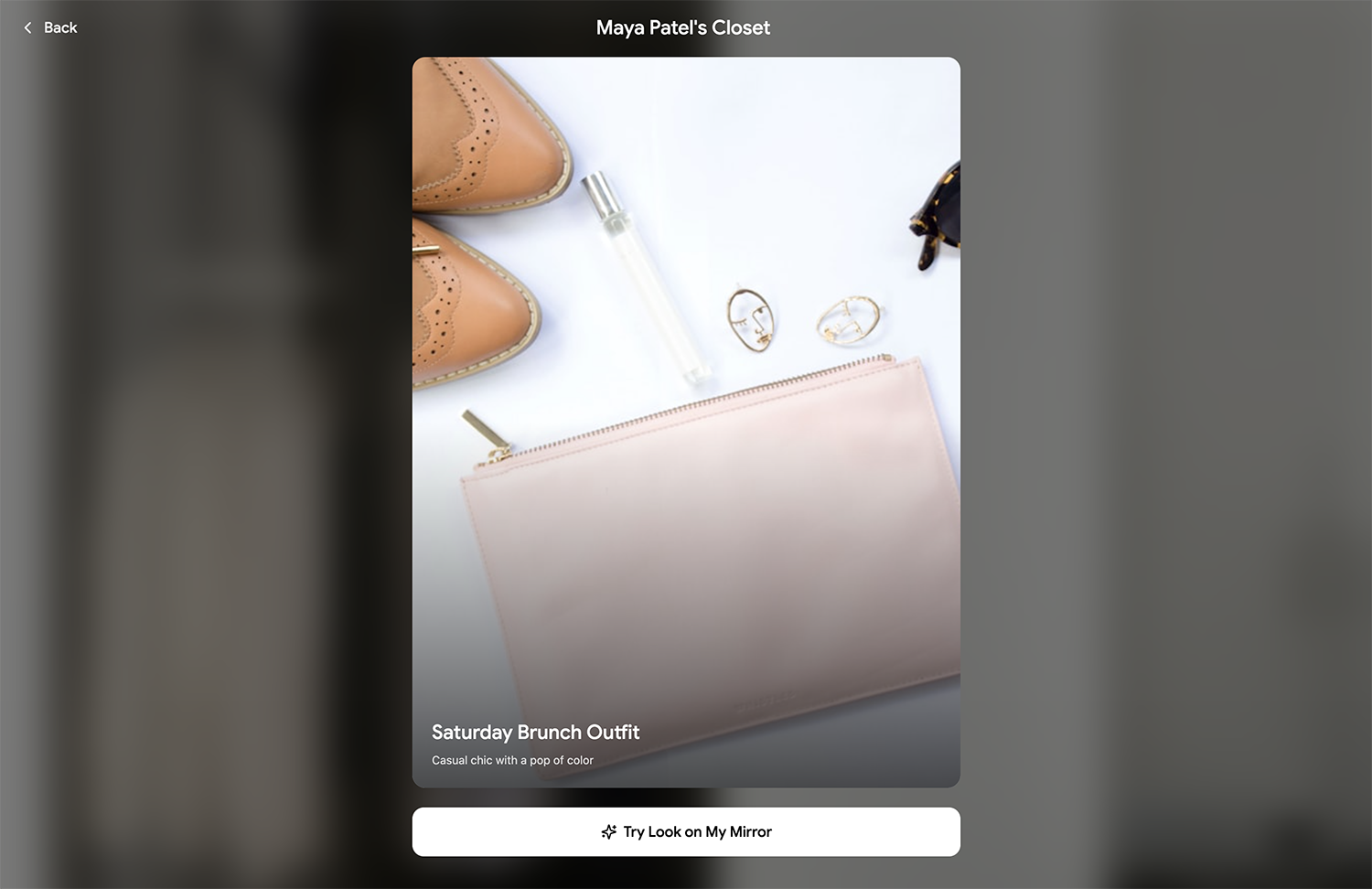

Not everyone can, or wants to, talk to their mirror. The companion app works as a remote control, with full navigation and a text-based AI stylist for users who prefer typing over speaking.

Three ways to interact

Voice is the fastest path, but it's never the only one. The system supports three input modes that cover different contexts and accessibility needs.

Voice Commands

The primary input. Speak naturally to browse, save, and restyle.

Mobile App Remote

The companion app doubles as a remote control. Browse, navigate, and swipe through outfits from your phone, for users who prefer not to speak or are in a shared space.

Text-Based AI Stylist

Type to the AI stylist directly in the app. Ask for outfit ideas, describe what you're in the mood for, or request specific combinations, all without saying a word.

07 — The Outcome

What TrueStyle delivers

Together, four core features turn the mirror from a passive surface into an active styling partner.

AI Styling

A learning model that studies your style goals over time — not just what you wear, but how you want to dress. The more you use it, the sharper the suggestions get.

My Closet

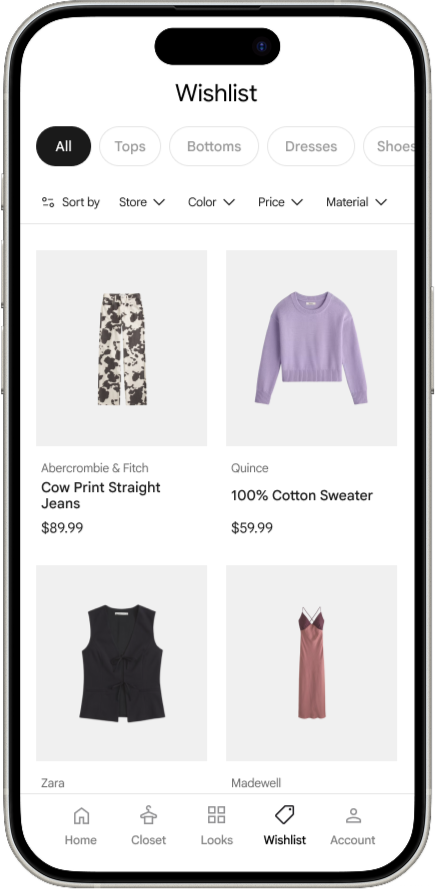

Your full wardrobe, digitized and browsable. Every piece cataloged, tagged, and ready to be styled — so nothing gets forgotten at the back of the rack.

Virtual Try-On

See outfits on your mirror avatar before committing. Evaluate fit, proportion, and pairings without changing clothes.

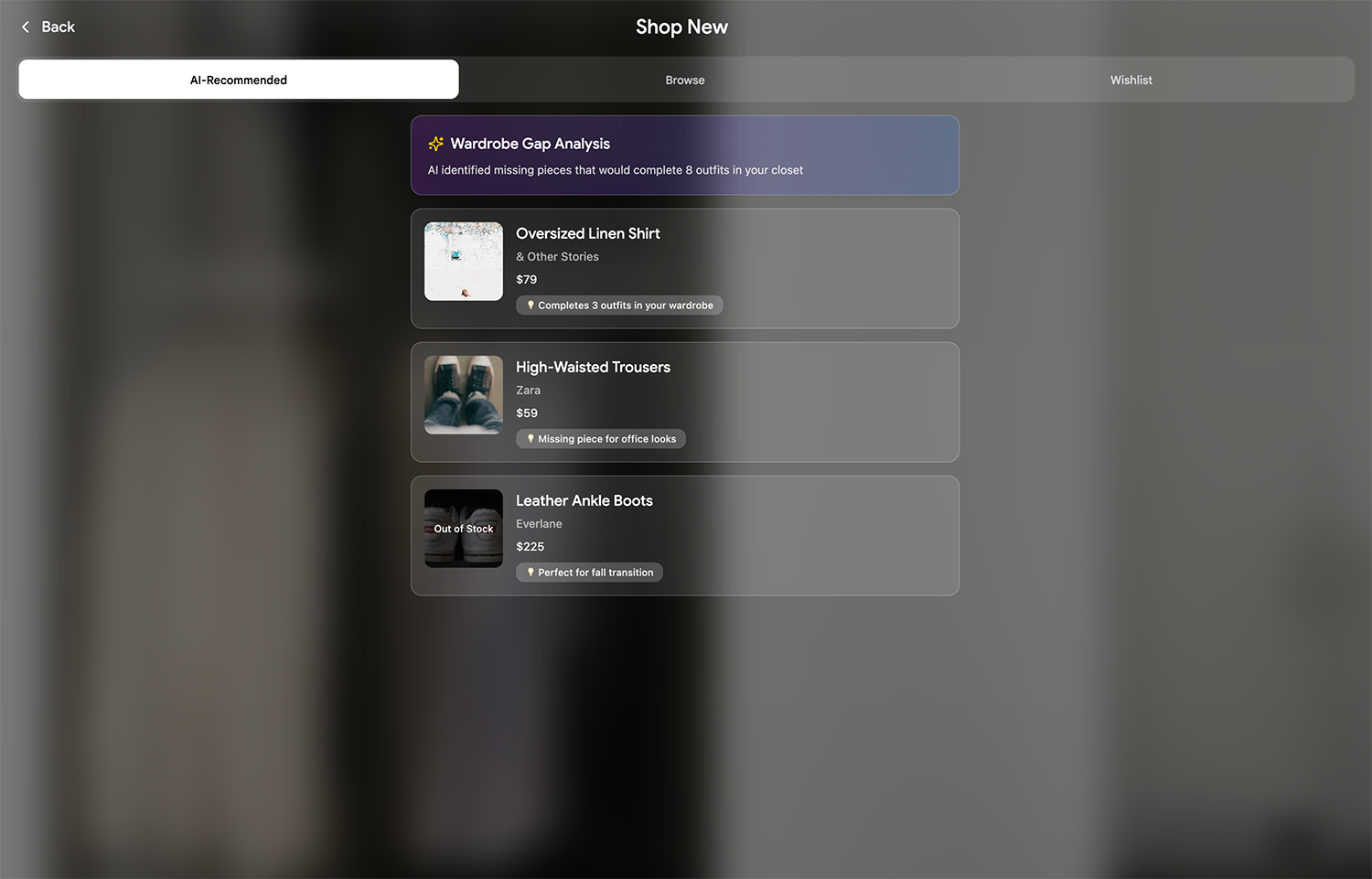

Smart Shopping

Gap analysis meets capsule wardrobe building. The mirror identifies what's missing and recommends versatile pieces that work with what you already own — fewer items, more outfits.

How it actually works.

My TrueStyle prototype runs on a vertical TV, a Kinect v2 camera, and Unity, turning off-the-shelf hardware into an AR styling mirror. Here's the system broken down.

Body Tracking

The Kinect reads your skeleton (25 joints, updated every frame) and streams that data to Unity over UDP. The system knows where your shoulders, spine, and hips are in 3D space, which is how clothing lands in the right spot.
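To make the data path concrete, here is a minimal sketch of the receiving side, written in Python for brevity rather than the Unity C# the prototype uses. The wire format is an assumption, since the real packet layout isn't described here: one datagram per frame holding 25 joints as little-endian float32 x, y, z triples. The names `decode_skeleton`, `encode_skeleton`, and `listen`, and the port number, are hypothetical.

```python
import socket
import struct

JOINT_COUNT = 25        # Kinect v2 reports 25 joints per tracked body
FLOATS_PER_JOINT = 3    # x, y, z per joint (assumed packet layout)
PACKET_FORMAT = "<" + "f" * (JOINT_COUNT * FLOATS_PER_JOINT)
PACKET_SIZE = struct.calcsize(PACKET_FORMAT)  # 25 * 3 * 4 = 300 bytes


def decode_skeleton(packet: bytes) -> list:
    """Turn one datagram into a list of 25 (x, y, z) joint positions."""
    if len(packet) != PACKET_SIZE:
        raise ValueError(f"expected {PACKET_SIZE} bytes, got {len(packet)}")
    values = struct.unpack(PACKET_FORMAT, packet)
    return [tuple(values[i:i + 3]) for i in range(0, len(values), 3)]


def encode_skeleton(joints) -> bytes:
    """Inverse of decode_skeleton, handy for testing without a sensor."""
    flat = [coord for joint in joints for coord in joint]
    return struct.pack(PACKET_FORMAT, *flat)


def listen(port: int = 9000):
    """Yield decoded skeletons from UDP datagrams (port is a placeholder)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.bind(("127.0.0.1", port))
        while True:
            packet, _sender = sock.recvfrom(PACKET_SIZE)
            yield decode_skeleton(packet)
```

UDP suits this kind of stream because a dropped frame is simply replaced by the next one a moment later, so there is nothing worth retransmitting.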

Clothing Overlay

Tops anchor to your shoulder and spine joints. Bottoms anchor to your hips. Each piece scales dynamically based on your body's depth: step closer and the clothing gets larger, step back and it shrinks. The motion is smoothed with interpolation so nothing jitters.
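The anchoring, depth scaling, and smoothing described above can be sketched in a few lines. This is an illustrative Python version, not the prototype's Unity code. The reference depth, the smoothing factor, and the `Overlay` class are all assumptions chosen for the example, and it uses only the shoulder midpoint where the prototype also uses spine joints.

```python
from dataclasses import dataclass

REFERENCE_DEPTH_M = 2.0  # assumed distance at which clothing draws at scale 1.0
SMOOTHING = 0.2          # assumed per-frame blend factor, between 0 and 1


def lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation from a toward b by fraction t."""
    return a + (b - a) * t


@dataclass
class Overlay:
    x: float = 0.0
    y: float = 0.0
    scale: float = 1.0

    def update(self, left_shoulder, right_shoulder, depth_m: float) -> None:
        """Move one smoothing step toward the pose seen in this frame."""
        # Anchor a top at the midpoint between the two shoulder joints.
        target_x = (left_shoulder[0] + right_shoulder[0]) / 2
        target_y = (left_shoulder[1] + right_shoulder[1]) / 2
        # Closer body (smaller depth) means larger clothing, and vice versa.
        target_scale = REFERENCE_DEPTH_M / max(depth_m, 0.1)
        # Blend toward the target instead of snapping, which damps jitter.
        self.x = lerp(self.x, target_x, SMOOTHING)
        self.y = lerp(self.y, target_y, SMOOTHING)
        self.scale = lerp(self.scale, target_scale, SMOOTHING)
```

A lower smoothing factor gives steadier clothing at the cost of more lag behind fast movement, so the value is a tuning decision rather than a fixed constant.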

Gesture & Voice Input

Swipe your right hand to browse tops, left hand for bottoms. The system tracks wrist velocity and displacement to distinguish intentional swipes from casual movement. A cooldown prevents the return motion from triggering a second swipe.
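As a rough illustration of that logic, the sketch below combines a displacement threshold, a velocity threshold, and a cooldown. It is written in Python rather than Unity C#, and every threshold value, along with the `SwipeDetector` class itself, is an assumption made for the example, not the prototype's tuning.

```python
from dataclasses import dataclass
from typing import Optional

MIN_DISPLACEMENT_M = 0.25  # assumed: wrist must travel at least this far
MIN_VELOCITY_MPS = 1.0     # assumed: and at least this fast
COOLDOWN_S = 0.6           # assumed: quiet period after each swipe
WINDOW_S = 0.5             # assumed: slow motion older than this is discarded


@dataclass
class SwipeDetector:
    start_x: Optional[float] = None
    start_t: float = 0.0
    last_swipe_t: float = float("-inf")

    def update(self, wrist_x: float, t: float) -> Optional[str]:
        """Feed one wrist sample; return 'left', 'right', or None."""
        if t - self.last_swipe_t < COOLDOWN_S:
            # Still cooling down: swallow the hand's return motion.
            self.start_x, self.start_t = wrist_x, t
            return None
        if self.start_x is None:
            self.start_x, self.start_t = wrist_x, t
            return None
        displacement = wrist_x - self.start_x
        elapsed = t - self.start_t
        if elapsed <= 0:
            return None
        velocity = abs(displacement) / elapsed
        if abs(displacement) >= MIN_DISPLACEMENT_M and velocity >= MIN_VELOCITY_MPS:
            self.last_swipe_t = t
            self.start_x, self.start_t = wrist_x, t
            return "right" if displacement > 0 else "left"
        if elapsed > WINDOW_S:
            # Casual drift: restart the measurement window from here.
            self.start_x, self.start_t = wrist_x, t
        return None
```

Requiring both distance and speed is what separates a deliberate swipe from someone slowly adjusting a sleeve, and the cooldown keeps the hand's trip back to rest from registering as a swipe in the opposite direction.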

Wardrobe System

Outfits are organized into tops and bottoms arrays, each with properties for texture, gender tag, vertical offset, and aspect ratio. A carousel shows semi-transparent previews of the previous and next items on either side of the current selection.
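One way to model this data is sketched below in Python. The field names follow the properties listed above, while the `ClothingItem` and `Carousel` classes, the wrap-around behavior at the ends of the list, and the sample texture names are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClothingItem:
    texture: str            # asset name or path (placeholder values below)
    gender_tag: str
    vertical_offset: float  # nudges the piece up or down on the body
    aspect_ratio: float     # width divided by height of the texture


class Carousel:
    """Tracks the current item and exposes its two neighbors for previews."""

    def __init__(self, items):
        if not items:
            raise ValueError("carousel needs at least one item")
        self.items = list(items)
        self.index = 0

    def step(self, delta: int) -> None:
        # Modulo wraps past either end (an assumed behavior).
        self.index = (self.index + delta) % len(self.items)

    def visible(self):
        """Return (previous, current, next) for rendering."""
        n = len(self.items)
        return (
            self.items[(self.index - 1) % n],
            self.items[self.index],
            self.items[(self.index + 1) % n],
        )


tops = [
    ClothingItem("top_white_tee", "unisex", 0.00, 0.90),
    ClothingItem("top_denim_jacket", "unisex", 0.02, 1.10),
    ClothingItem("top_knit_sweater", "unisex", 0.01, 1.00),
]
top_carousel = Carousel(tops)
```

A second `Carousel` over the bottoms array would work the same way, so each hand's swipe can drive its own independent selection.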

Screen Flow

A four-state system manages the experience from launch to idle. Startup fades in the logo, then cross-fades to gender selection. The mirror state is the live AR view. If nobody's tracked for a set period, the mirror drops to an idle loop and resets when someone steps back in.
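The flow reads naturally as a small state machine. The Python sketch below is one possible shape for it, under stated assumptions: the 30-second idle timeout is a placeholder for the unspecified "set period", the event method names are hypothetical, and returning to the startup screen is one interpretation of "resets".

```python
from enum import Enum, auto

IDLE_TIMEOUT_S = 30.0  # assumed placeholder for the untracked "set period"


class Screen(Enum):
    STARTUP = auto()
    GENDER_SELECT = auto()
    MIRROR = auto()
    IDLE = auto()


class ScreenFlow:
    def __init__(self):
        self.state = Screen.STARTUP
        self.untracked_s = 0.0

    def on_logo_faded(self) -> None:
        if self.state is Screen.STARTUP:
            self.state = Screen.GENDER_SELECT

    def on_gender_selected(self) -> None:
        if self.state is Screen.GENDER_SELECT:
            self.state = Screen.MIRROR

    def tick(self, dt: float, body_tracked: bool) -> None:
        """Advance timers once per frame; dt is seconds since the last frame."""
        if self.state is Screen.MIRROR:
            # Count time with nobody in view; any tracked frame resets it.
            self.untracked_s = 0.0 if body_tracked else self.untracked_s + dt
            if self.untracked_s >= IDLE_TIMEOUT_S:
                self.state = Screen.IDLE
        elif self.state is Screen.IDLE and body_tracked:
            # Someone stepped back in: start the experience over (assumed).
            self.untracked_s = 0.0
            self.state = Screen.STARTUP
```

Guarding each transition on the current state means a stray event, such as a late fade callback arriving during the mirror view, is ignored instead of knocking the experience into the wrong screen.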

Audio

Swipe sounds use PlayOneShot so rapid outfit changes don't cut each other off: each swipe's sound plays through to the end, even when the next one starts. The startup screen has a dedicated audio source that fades out as the experience begins.